Deploy Fusion at Scale

- Prerequisites

- Custom values YAML file

- Configure Solr sizing

- Configure multiple node pools

- Solr auto-scaling policy

- Pod network policy

- On-premises private Docker registries

- Add additional trusted certificate(s) for connectors to allow crawling of web resources with SSL/TLS enabled (optional)

- Install Fusion 5 on Kubernetes

- Monitoring Fusion with Prometheus and Grafana

Before you begin, see Fusion Server Deployment to understand the architecture and requirements.

This article explains how to plan and execute a Fusion deployment at the scale required for staging or production.

While the setup_f5_*.sh scripts are handy for getting started and proof-of-concept purposes, this article covers the planning process for building a production-ready environment.

Prerequisites

You must meet the following prerequisites before you can customize your Fusion cluster:

- A local copy of the fusion-cloud-native repository. This must be up to date with the latest master branch.

- Any cloud provider-specific command line tools, such as gcloud or aws, and kubectl. See the platform-specific instructions linked above, or check with your cloud provider.

- Helm v3

  - To install on a Mac:

        brew upgrade kubernetes-helm

  - For other operating systems, download from Helm Releases.

  - Verify your installation:

        helm version --short
        v3.0.0+ge29ce2a

- Kubernetes namespace

  - Collect the following information about your Kubernetes environment:

    - CLUSTER: Cluster name (passed to our setup scripts using the -c arg)

    - NAMESPACE: Kubernetes namespace where to install Fusion. A namespace should contain only lowercase letters (a-z), digits (0-9), or dashes. No periods or underscores are allowed.

- (optional) Clarify your organization’s DockerHub policy. The Fusion Helm chart points to public Docker images on DockerHub. Your organization may not allow Kubernetes to pull images directly from DockerHub or may require extra security scanning before loading images into production clusters.

  Consult your Kubernetes and Docker admin team to find how to get the Fusion images loaded into a registry that’s accessible to your cluster. You can update the image for each service using the custom values YAML file.
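The namespace naming rule above can be checked before you pass a name to the setup scripts. The following is a minimal sketch, not part of the fusion-cloud-native scripts: the function name is_valid_namespace is hypothetical, and the 63-character limit and the ban on a leading or trailing dash come from the general Kubernetes naming rules rather than from this article.

```shell
# Return 0 if the argument looks usable as a Kubernetes namespace name.
# Hypothetical helper; not part of the fusion-cloud-native repository.
is_valid_namespace() {
  ns="$1"
  # Empty names are invalid, and Kubernetes caps these names at 63 characters.
  [ -n "$ns" ] || return 1
  [ "${#ns}" -le 63 ] || return 1
  case "$ns" in
    *[!a-z0-9-]*) return 1 ;;  # contains something other than a-z, 0-9, or dash
    -*|*-)        return 1 ;;  # Kubernetes also rejects a leading or trailing dash
  esac
  return 0
}

# Usage (the value f5 is only an example):
#   is_valid_namespace "f5" || echo "invalid namespace" >&2
```

Running the check first gives a clear error message instead of a failed install later.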


Custom values YAML file

- Clone the fusion-cloud-native repository:

      git clone https://github.com/lucidworks/fusion-cloud-native

- Run the customize_fusion_values.sh script.

      ./customize_fusion_values.sh --provider <provider> -c <cluster> -n <namespace> \
        --num-solr 3 \
        --solr-disk-gb 100 \
        --node-pool <node_selector> \
        --prometheus true \
        --with-resource-limits \
        --with-affinity-rules

  Pass the --help parameter to see script usage details.

  The script creates the following files:

  | File | Description |
  |---|---|
  | <provider>_<cluster>_<namespace>_fusion_values.yaml | Main custom values YAML used to override Helm chart defaults for Fusion microservices. |
  | <provider>_<cluster>_<namespace>_monitoring_values.yaml | Custom values YAML used to configure Prometheus and Grafana. |
  | <provider>_<cluster>_<namespace>_fusion_resources.yaml | Resource requests and limits for all microservices. |
  | <provider>_<cluster>_<namespace>_fusion_affinity.yaml | Pod affinity rules to ensure multiple replicas for a single service are evenly distributed across zones and nodes. |
  | <provider>_<cluster>_<namespace>_upgrade_fusion.sh | Script used to install and/or upgrade Fusion using the aforementioned custom values YAML files. |

  For an explanation of these placeholder values, see Configuration Values below.

- Add the new files to version control. You will make changes to them over time as you fine-tune your Fusion installation. You will also need them to perform upgrades. If you try to upgrade your Fusion installation and don’t provide the custom values YAML, your deployment will revert to chart defaults.
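Because the generated files share one naming pattern, a short helper can list them and report any that are missing before you commit. This is a sketch under stated assumptions: the function names fusion_generated_files and missing_fusion_files are hypothetical, and the check looks only in the current directory. It also assumes all five files are expected, which depends on the flags you passed to the script.

```shell
# Print the five file names that customize_fusion_values.sh is described
# as creating for a given provider, cluster, and namespace.
# Hypothetical helper; not part of the fusion-cloud-native repository.
fusion_generated_files() {
  prefix="${1}_${2}_${3}"   # <provider>_<cluster>_<namespace>
  for suffix in fusion_values.yaml monitoring_values.yaml \
                fusion_resources.yaml fusion_affinity.yaml upgrade_fusion.sh; do
    printf '%s_%s\n' "$prefix" "$suffix"
  done
}

# Print the expected files that do not exist in the current directory.
missing_fusion_files() {
  fusion_generated_files "$@" | while IFS= read -r f; do
    [ -f "$f" ] || printf '%s\n' "$f"
  done
}

# Usage (gke, search, and f5 are the example values used in this article):
#   missing_fusion_files gke search f5
```

An empty result means every expected file is present and can be added to version control.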

Review the <provider>_<cluster>_<release>_fusion_values.yaml file to familiarize yourself with its structure and contents. Notice it contains a separate section for each of the Fusion microservices. The example configuration of the query-pipeline service below illustrates some important concepts about the custom values YAML file.

    query-pipeline: (1)
      enabled: true (2)
      nodeSelector: (3)
        cloud.google.com/gke-nodepool: default-pool
      javaToolOptions: "..." (4)
      pod: (5)
        annotations:
          prometheus.io/port: "8787"
          prometheus.io/scrape: "true"
          prometheus.io/path: "/actuator/prometheus"

| 1 | Service-specific setting overrides under the top-level heading |
| 2 | Every Fusion service has an implicit enabled flag that defaults to true; set it to false to remove this service from your cluster |
| 3 | Node selector identifies the label used to find nodes to schedule pods on |
| 4 | Used to pass JVM options to the service |
| 5 | Pod annotations to allow Prometheus to scrape metrics from the service |

Once we go through all of the configuration topics in this article, you’ll have a well-configured custom values YAML file for your Fusion 5 installation. You’ll then use this file during the Helm v3 installation at the end of this article.

Deployment-specific values

The script creates a custom values YAML file using the naming convention: <provider>_<cluster>_<namespace>_fusion_values.yaml. For example, gke_search_f5_fusion_values.yaml.

| Parameter | Description |
|---|---|
| <provider> | The K8s platform you’re running on, such as gke. |
| <cluster> | The name of your cluster. |
| <namespace> | The K8s namespace where you want to install Fusion. |
| <node_selector> | Specifies a nodeSelector label that determines which nodes Fusion pods are scheduled on. |

| Providing the correct --node-pool <node_selector> label is very important. Using the wrong value will cause your pods to be stuck in the pending state. If you’re not sure about the correct value for your cluster, pass '{}' to let Kubernetes decide which nodes to schedule Fusion pods on. |

Default nodeSelector labels are provider-specific. The fusion-cloud-native scripts use the following defaults for GKE and EKS:

| Provider | Default node selector |
|---|---|
| GKE | cloud.google.com/gke-nodepool: default-pool |
| EKS | alpha.eksctl.io/nodegroup-name: standard-workers |

Flags

The script provides flags for additional configuration:

| Flag | Description |
|---|---|
| --node-pool | Add a Fusion specific label to your nodes. |
| --with-resource-limits | Configure resource requests/limits. |
|  | Configure replica counts. |
| --with-affinity-rules | Configure pod affinity rules for Fusion services. |

To use a Fusion-specific node label with --node-pool, first label your nodes:

    kubectl label nodes <NODE_ID> fusion_node_type=<NODE_LABEL>

Then, pass --node-pool 'fusion_node_type: <NODE_LABEL>'.

Configure Solr sizing

When you’re ready to build a production-ready setup for Fusion 5, you need to customize the Fusion Helm chart to ensure Fusion is well-configured for production workloads.

You’ll be able to scale the number of nodes for Solr up and down after building the cluster, but you need to establish the initial size of the nodes (memory and CPU) and the size and type of disks you need.

See the example config below to learn which parameters to change in the custom values YAML file.

    solr:
      resources:                    # Set resource limits for Solr to help K8s pod scheduling;
        limits:                     # these limits are not just for the Solr process in the pod,
          cpu: "7700m"              # so allow ample memory for loading index files into the OS cache (mmap)
          memory: "26Gi"
        requests:
          cpu: "7000m"
          memory: "25Gi"
      logLevel: WARN
      nodeSelector:
        fusion_node_type: search    # Run this Solr StatefulSet in the "search" node pool
      exporter:
        enabled: true               # Enable the Solr metrics exporter (for Prometheus) and
                                    # schedule on the default node pool (system partition)
        podAnnotations:
          prometheus.io/scrape: "true"
          prometheus.io/port: "9983"
          prometheus.io/path: "/metrics"
        nodeSelector:
          cloud.google.com/gke-nodepool: default-pool
      image:
        tag: 8.4.1
      updateStrategy:
        type: "RollingUpdate"
      javaMem: "-Xmx3g -Dfusion_node_type=system" # Configure memory settings for Solr
      solrGcTune: "-XX:+UseG1GC -XX:-OmitStackTraceInFastThrow -XX:+UseStringDeduplication -XX:+PerfDisableSharedMem -XX:+ParallelRefProcEnabled -XX:MaxGCPauseMillis=150 -XX:+UseLargePages -XX:+AlwaysPreTouch"
      volumeClaimTemplates:
        storageSize: "100Gi"        # Size of the Solr disk
      replicaCount: 6               # Number of Solr pods to run in this StatefulSet

    zookeeper:
      nodeSelector:
        cloud.google.com/gke-nodepool: default-pool
      replicaCount: 3               # Number of Zookeepers
      persistence:
        size: 20Gi
      resources: {}
      env:
        ZK_HEAP_SIZE: 1G
        ZOO_AUTOPURGE_PURGEINTERVAL: 1

To be clear, you can tune GC settings and the number of replicas after the cluster is built. Changing the size of the persistent volumes is more complicated, so try to pick a good size initially.

Configure storage class for Solr pods (optional)

If you wish to run with a storage class other than the default you can create a storage class for your Solr pods before you install. For example, to create regional disks in GCP you can create a file called storageClass.yaml with the following contents:

    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: solr-gke-storage-regional
    provisioner: kubernetes.io/gce-pd
    parameters:
      type: pd-standard
      replication-type: regional-pd
      zones: us-west1-b, us-west1-c

Then provision it into your cluster by calling:

    kubectl apply -f storageClass.yaml

To have Solr use the storage class, add the following to the custom values YAML:

    solr:
      volumeClaimTemplates:
        storageClassName: solr-gke-storage-regional
        storageSize: 250Gi

| We’re not advocating that you must use regional disks for Solr storage, as that would be redundant with Solr replication. We’re just using this as an example of how to configure a custom storage class for Solr disks if you see the need. For instance, you could use regional disks without Solr replication for write-heavy type collections. |

Configure multiple node pools

Lucidworks recommends isolating search workloads from analytics workloads using multiple node pools. The included scripts do not do this for you; this is a manual process.

For an example script for GKE, see create_gke_cluster_node_pools.sh.

In the custom values YAML file, you can add additional Solr StatefulSets by adding their names to the list under the nodePools property. If a property for a StatefulSet needs to differ from the default values, set it directly on the object representing the node pool. Any properties that are omitted default to the base value. See the following example (additional whitespace added for display purposes only):

    solr:
      nodePools:
        - name: "" (1)
        - name: "analytics" (2)
          javaMem: "-Xmx6g"
          replicaCount: 6
          storageSize: "100Gi"
          nodeSelector:
            fusion_node_type: analytics (3)
          resources:
            requests:
              cpu: 2
              memory: 12Gi
            limits:
              cpu: 3
              memory: 12Gi
        - name: "search" (4)
          javaMem: "-Xms11g -Xmx11g"
          replicaCount: 12
          storageSize: "50Gi"
          nodeSelector:
            fusion_node_type: search (5)
          resources:
            limits:
              cpu: "7700m"
              memory: "26Gi"
            requests:
              cpu: "7000m"
              memory: "25Gi"
      nodeSelector:
        cloud.google.com/gke-nodepool: default-pool (6)
      ...

| 1 | The empty string "" is the suffix for the default partition. |
| 2 | Overrides the settings for the analytics Solr pods. |
| 3 | Assigns the analytics Solr pods to the node pool and attaches the label fusion_node_type=analytics. You can use the fusion_node_type property in Solr auto-scaling policies to govern replica placement during collection creation. |
| 4 | Overrides the settings for the search Solr pods. |
| 5 | Assigns the search Solr pods to the node pool and attaches the label fusion_node_type=search. |
| 6 | Sets the default settings for all Solr pods, if not specifically overridden in the nodePools section above. |

| Do not edit the nodePools value "". |

In the example above, the analytics partition replicaCount, or number of Solr pods, is six. The search partition replicaCount is twelve.

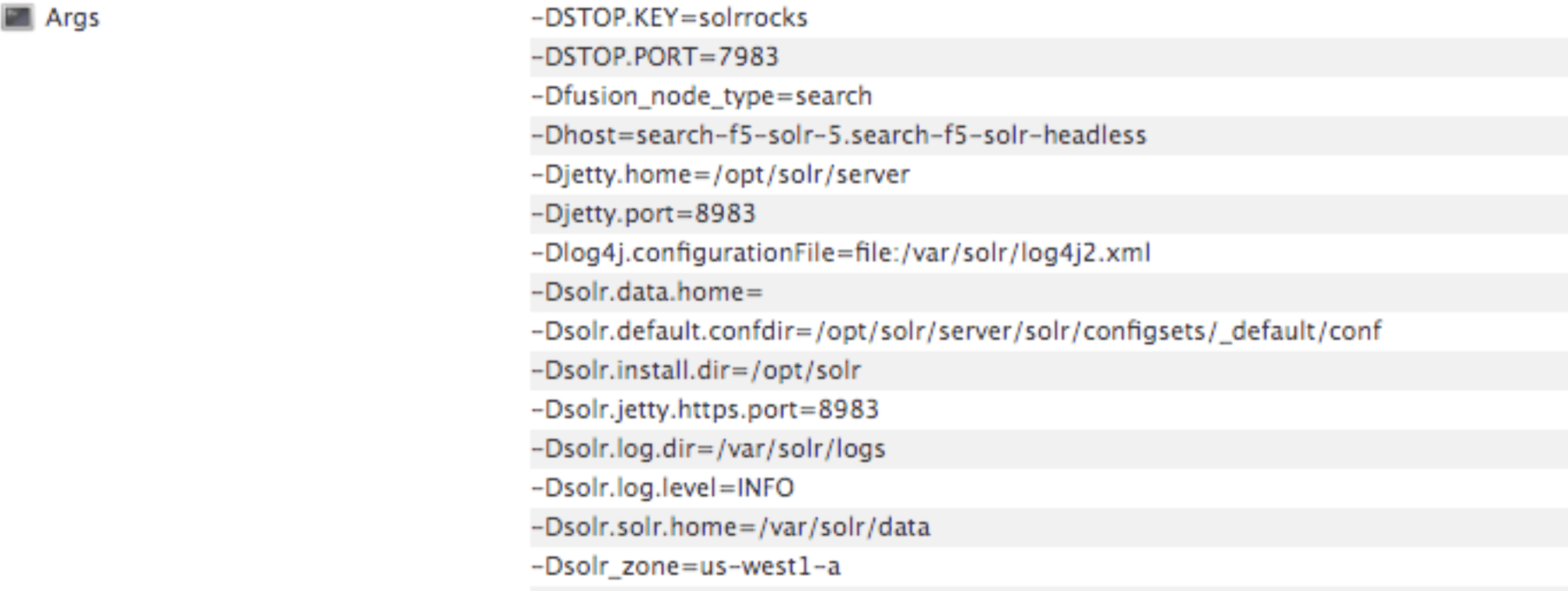

Each nodePool is automatically be assigned the -Dfusion_node_type property of <search>, <system>, or <analytics>. This value matches the name of the nodePool. For example, -Dfusion_node_type=<search>.

The Solr pods have a fusion_node_type system property, as shown below:

Solr auto-scaling policy

You can configure a custom Solr auto-scaling policy in the custom values YAML file under the fusion-admin section, as shown below:

    fusion-admin:
      ...
      solrAutoscalingPolicyJson:
        {
          "set-cluster-policy": [
            {"node": "#ANY", "shard": "#EACH", "replica":"<2"},
            {"replica": "#EQUAL", "sysprop.solr_zone": "#EACH", "strict" : false}
          ]
        }

You can use an auto-scaling policy to govern how the shards and replicas for Fusion system and application-specific collections are configured. If your cluster defines the search, analytics, and system node pools, use the policy.json file provided in the fusion-cloud-native repo as a starting point. The Fusion Admin service applies the policy from the custom values YAML file to Solr before creating system collections during initialization.

Pod network policy

A Kubernetes network policy governs how groups of pods communicate with each other and other network endpoints. With Fusion, all incoming traffic flows through the API Gateway service. All Fusion services in the same namespace expect an internal JWT, which is supplied by the Gateway, as part of the request. As a result, Fusion services enforce a basic level of API security and don’t need an additional network policy to protect them from other pods in the cluster.

If your organization still requires a network policy for Fusion services, pass --set global.networkPolicyEnabled=true when installing the Fusion Helm chart.

On-premises private Docker registries

For on-premises Kubernetes deployments, your organization may not allow Kubernetes to pull Fusion’s Docker images from DockerHub. See the instructions below for details on using a private Docker registry with Fusion 5.1.1. These are general instructions that may need to be adapted to work within your organization’s security policies:

- Transfer the public images from DockerHub to your private Docker registry.

  - Establish a workstation that has access to DockerHub. This workstation must connect to your internal Docker registry, most likely via VPN connection. In this example, the workstation is referred to as envoy.

  - Install Docker on envoy. You need at least 100GB of free disk for Docker.

  - Pull all of the images from DockerHub to envoy’s local registry. For example, to pull the query pipeline image, run docker pull lucidworks/query-pipeline:5.1.1. This will take a long time. The Fusion 5.1.1 chart requires the following images:

        apachepulsar/pulsar-all:2.5.0
        argoproj/argoui:v2.4.3
        argoproj/workflow-controller:v2.4.3
        bitnami/kubectl:1.15-debian-9
        busybox:1.31.1
        busybox:latest
        curlimages/curl:7.68.0
        docker.io/seldonio/seldon-core-operator:1.0.1
        grafana/grafana:6.6.2
        jimmidyson/configmap-reload:v0.3.0
        lucidworks/admin-ui:5.1.1
        lucidworks/api-gateway:5.1.1
        lucidworks/auth-ui:5.1.0
        lucidworks/classic-rest-service:5.1.1
        lucidworks/devops-ui:5.1.0
        lucidworks/fusion-api:5.1.1
        lucidworks/fusion-indexing:5.1.1
        lucidworks/fusion-logstash:5.1.0
        lucidworks/insights:5.1.0
        lucidworks/job-launcher:5.1.1
        lucidworks/job-rest-server:5.1.1
        lucidworks/ml-model-service:5.1.0
        lucidworks/ml-python-image:5.1.0
        lucidworks/pm-ui:5.1.0
        lucidworks/query-pipeline:5.1.1
        lucidworks/rest-service:5.1.1
        lucidworks/rpc-service:5.1.1
        lucidworks/rules-ui:5.1.0
        lucidworks/webapps:5.1.0
        prom/prometheus:v2.16.0
        prom/pushgateway:v1.0.1
        quay.io/coreos/kube-state-metrics:v1.9.5
        quay.io/datawire/ambassador:0.86.1
        seldonio/seldon-core-operator:1.0.1
        solr:8.4.1
        zookeeper:3.5.6

    See docker pull --help for more information about pulling Docker images.

  - Establish a connection from envoy to the private Docker registry, most likely via a VPN connection. In this example, the private Docker registry is referred to as <internal-private-registry>.

  - Push the images from envoy’s Docker registry to the private registry. This will take a long time.

    - You’ll need to re-tag all images for the internal registry. For example, to tag the query-pipeline image, run:

          docker tag lucidworks/query-pipeline:5.1.1 <internal-private-registry>/query-pipeline:5.1.1

    - Push each image to the internal repo:

          docker push <internal-private-registry>/query-pipeline:5.1.1

- Install the Docker registry secret in Kubernetes. Create the Docker registry secret in the Kubernetes namespace where you want to install Fusion:

      SECRET_NAME=<internal-private-secret>
      REPO=<internal-private-registry>
      kubectl create secret docker-registry "${SECRET_NAME}" \
        --namespace "${NAMESPACE}" \
        --docker-server="${REPO}" \
        --docker-username=${REPO_USER} \
        --docker-password=${REPO_PASS} \
        --docker-email=${REPO_USER}

  For details, see the Kubernetes article Pull an Image from a Private Registry.

- Update the custom values YAML for your cluster to point to your private registry and secret to allow Kubernetes to pull images. For example:

      query-pipeline:
        image:
          imagePullSecrets:
            - name: <internal-private-secret>
          repository: <internal-private-registry>

  Repeat the process for all Fusion services.
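The pull, re-tag, and push steps above repeat for every image, so they can be scripted. The sketch below makes some assumptions: the function names are hypothetical, the image list is saved one image per line in a file you create yourself, and each image keeps only its final path component in the private registry, as in the query-pipeline example. Confirm that this layout matches what your registry and custom values YAML expect before using it.

```shell
# Map a public image reference to its name in the private registry.
# Assumption: only the final path component is kept, matching the
# query-pipeline example (lucidworks/query-pipeline:5.1.1 ->
# <internal-private-registry>/query-pipeline:5.1.1).
private_image_name() {
  target_registry="$1"
  source_image="$2"
  printf '%s/%s\n' "$target_registry" "${source_image##*/}"
}

# Pull, re-tag, and push every image listed (one per line) in a file.
# Stops at the first image that fails.
mirror_images() {
  registry="$1"
  list_file="$2"   # hypothetical file name, for example images.txt
  # Read the list on file descriptor 3 so docker cannot consume it via stdin.
  while IFS= read -r image <&3; do
    [ -n "$image" ] || continue   # skip blank lines
    target="$(private_image_name "$registry" "$image")"
    docker pull "$image" &&
      docker tag "$image" "$target" &&
      docker push "$target" || return 1
  done 3< "$list_file"
}

# Usage (run on the workstation that can reach both registries):
#   mirror_images "<internal-private-registry>" images.txt
```

Keeping the name mapping in its own function makes it easy to change if your registry requires a different repository layout.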

Customize Helm Chart

Every Fusion service allows you to override the imagePullSecrets setting using custom values YAML. However, other 3rd party services—including Zookeeper, Pulsar, Prometheus, and Grafana—don’t allow you to supply the pull secret using the custom values YAML.

To patch the default service account for your namespace and add the pull secret, run the following:

    kubectl patch sa default -n $NAMESPACE \
      -p '{"imagePullSecrets": [{"name": "<internal-private-secret>"}]}'

| Replace <internal-private-secret> with the name of the secret you created in the steps above. |

This allows the default service account to pull images from the private registry without specifying the pull secret on the resources directly.

Add additional trusted certificate(s) for connectors to allow crawling of web resources with SSL/TLS enabled (optional)

To crawl a datasource that uses a self-signed certificate, add that certificate to the trusted certificates for the connector services. For example:

Fusion 5.3x, 5.2x, and 5.1x

    classic-rest-service:
      trustedCertificates:
        enabled: true
        files:
          some.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
          other.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
    connector-plugin-service:
      trustedCertificates:
        enabled: true
        files:
          some.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
          other.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----

Fusion 5.4 and later

    classic-rest-service:
      trustedCertificates:
        enabled: true
        files:
          some.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
          other.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
    connector-plugin:
      trustedCertificates:
        enabled: true
        files:
          some.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----
          other.cert: |-
            -----BEGIN CERTIFICATE-----
            MIIDeTCCAmGgAwIBAgIJAPziuikCTox4MA0GCSqGSIb3DQEBCwUAMGIxCzAJBgNV
            (...)
            EVA0pmzIzgBg+JIe3PdRy27T0asgQW/F4TY61Yk=
            -----END CERTIFICATE-----

Generating the certificate on the Linux command line

Use the following command to generate a .crt file in $fusion_home/apps/jetty/connectors/etc/yourcertname.crt:

openssl s_client -servername remote.server.net -connect remote.server.net:443 </dev/null | sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p' >$fusion_home/apps/jetty/connectors/etc/yourcertname.crt
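Once you have a PEM file, it must be indented correctly to sit under the files key of trustedCertificates in the custom values YAML. The helper below is a minimal sketch of that step. The function name cert_yaml_entry is hypothetical, and the indentation (6 spaces for the key, 8 for the certificate body) assumes files is nested two levels below the service name with 2-space indentation; adjust it to match your file.

```shell
# Print a PEM certificate as an entry for the trustedCertificates "files" map.
# Hypothetical helper; the indentation assumes this layout:
#   <service>:
#     trustedCertificates:
#       files:
#         <name>: |-
cert_yaml_entry() {
  entry_name="$1"   # key to use under files:, for example some.cert
  pem_file="$2"     # path to a PEM-encoded certificate
  printf '      %s: |-\n' "$entry_name"
  # Indent every line of the certificate so it belongs to the block scalar.
  sed 's/^/        /' "$pem_file"
}

# Usage (paste the output under the files: key of each connector service):
#   cert_yaml_entry some.cert yourcertname.crt
```

Generating the entry this way avoids hand-indenting each certificate line, which is an easy source of YAML parse errors.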

Generating the certificate using Firefox web browser

- Navigate to the SharePoint host.

- Click the [icon] in the address bar, then click the [icon] icon.

- Next, navigate to More Information > View Certificate > Export.

  Save the file to the following folder:

      $fusion_home/apps/jetty/connectors/etc/yourcertname.crt

Generating the certificate using Chrome web browser

- Navigate to Chrome menu > More Tools > Developer Tools > Security Tab.

  This will display the Security overview.

- Click the View certificate button.

- Save the file to the following folder:

      $fusion_home/apps/jetty/connectors/etc/yourcertname.crt

Generating the certificate using PowerShell

Use the following script to generate a .crt file in $fusion_home\apps\jetty\connectors\etc\yourcertname.crt:

    $fusion_home = "c:\your\fusion\install\directory"
    $webRequest = [Net.WebRequest]::Create("https://your-hostname")
    # The request may fail on an untrusted certificate; ignore the error and read the certificate anyway.
    try { $webRequest.GetResponse() } catch {}
    $cert = $webRequest.ServicePoint.Certificate
    $bytes = $cert.Export([Security.Cryptography.X509Certificates.X509ContentType]::Cert)
    # Write the binary certificate, then Base64-encode it with certutil.
    set-content -value $bytes -encoding byte -path "$fusion_home\apps\jetty\connectors\etc\yourcertname.binary.crt"
    certutil -encode "$fusion_home\apps\jetty\connectors\etc\yourcertname.binary.crt" "$fusion_home\apps\jetty\connectors\etc\yourcertname.crt"
    # Remove the intermediate binary file.
    rm "$fusion_home\apps\jetty\connectors\etc\yourcertname.binary.crt" -Force

Install Fusion 5 on Kubernetes

At this point, you’re ready to install Fusion 5 using the custom values YAML files and upgrade script. If you used the customize_fusion_values.sh script, run the upgrade script it generated using Bash. For example:

    ./gke_search_f5_upgrade_fusion.sh

Once the installation is complete, verify your Fusion installation is running correctly.

Monitoring Fusion with Prometheus and Grafana

Lucidworks recommends using Prometheus and Grafana for monitoring the performance and health of your Fusion cluster. Your operations team may already have these services installed. If not, install them into the Fusion namespace.

| The Custom values YAML file shown above activates the Solr metrics exporter service and adds pod annotations so Prometheus can scrape metrics from Fusion services. |

- Run the customize_fusion_values.sh script with the --prometheus true option. This creates an extra custom values YAML file for installing Prometheus and Grafana, <provider>_<cluster>_<namespace>_monitoring_values.yaml. For example: gke_search_f5_monitoring_values.yaml.

- Commit the YAML file to version control.

- Review its contents to ensure that the settings suit your needs. For example, decide how long you want to keep metrics. The default is 36 hours.

  See the Prometheus documentation and Grafana documentation for details.

- Run the install_prom.sh script to install Prometheus and Grafana in your namespace. Include the provider, cluster name, namespace, and Helm release as in the example below:

      ./install_prom.sh --provider gke -c search -n f5 -r 5-5-1

  Pass the --help parameter to see script usage details.

The Grafana dashboards from monitoring/grafana are installed automatically by the install_prom.sh script.