Automatically Classify New Queries

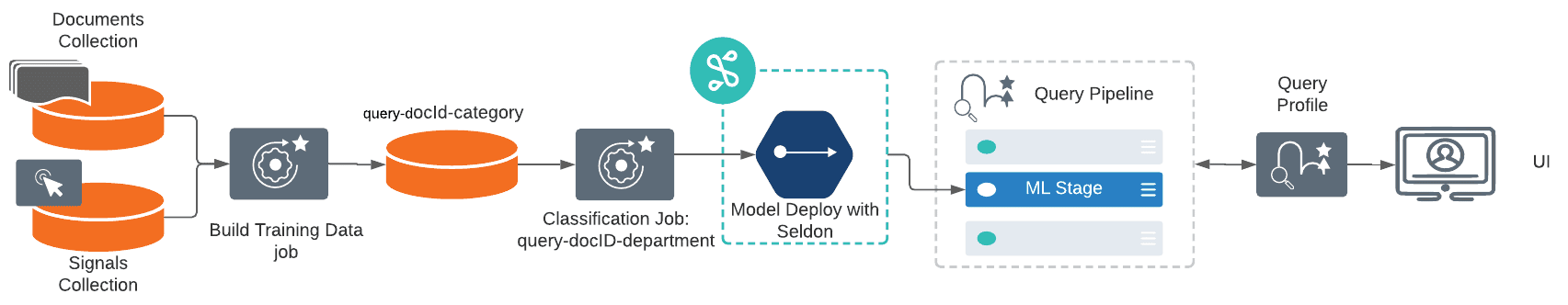

You can predict the categories most likely to satisfy a new query using this workflow:

-

Use the Build Training Data job to join your signals data with your catalog data and produce training data in the form of query/class pairs.

-

Use the Classification job to train a classification model using the output collection of the Build Training Data job as the training collection.

See the detailed steps below.
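To make the first step of the workflow concrete, here is a minimal sketch of the kind of join that produces query/class pairs. It is not the Build Training Data job's implementation. The sample records, the field names (`query`, `doc_id`, `count`, `id`, `category`), and the `buildTrainingPairs` helper are all hypothetical.

```javascript
// Hypothetical sample data; the field names are illustrative only.
const catalog = [
  { id: "sku-1", category: "laptops" },
  { id: "sku-2", category: "laptops" },
  { id: "sku-3", category: "headphones" },
];

const signals = [
  { query: "macbook", doc_id: "sku-1", count: 5 },
  { query: "macbook", doc_id: "sku-2", count: 2 },
  { query: "macbook", doc_id: "sku-3", count: 1 },
  { query: "earbuds", doc_id: "sku-3", count: 4 },
  { query: "earbuds", doc_id: "sku-9", count: 7 }, // no catalog match, so it is dropped
];

// Join each signal to its catalog item and sum the counts per query/class pair.
function buildTrainingPairs(signals, catalog) {
  const categoryById = new Map(catalog.map((item) => [item.id, item.category]));
  const totals = new Map();
  for (const signal of signals) {
    const category = categoryById.get(signal.doc_id);
    if (category === undefined) continue; // signal refers to an unknown item
    const key = JSON.stringify([signal.query, category]);
    totals.set(key, (totals.get(key) || 0) + signal.count);
  }
  return Array.from(totals, ([key, count]) => {
    const [query, category] = JSON.parse(key);
    return { query, category, count };
  });
}

const pairs = buildTrainingPairs(signals, catalog);
console.log(pairs);
```

With this sample data, the two "macbook" signals that point at laptop items collapse into a single pair with a count of 7, which is the sort of evidence a classifier can learn from.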

To predict the categories of new queries

-

Navigate to Collections > Jobs > Add+ > Build Training Data to create a new Build Training Data job.

-

Configure the job as follows:

-

In the Catalog Path field, enter the collection name or cloud storage path where your main content is stored.

-

In the Catalog Format field, enter `solr` if you are analyzing a Solr collection, or another format if your content is in the cloud.

-

In the Signals Path field, enter the collection name or cloud storage path where your signals data is stored.

-

In the Output Path field, enter the collection name or cloud storage path where you want to store the training data.

-

In the Category Field in Catalog field, enter the field name for the category data in your main content.

-

In the Item ID Field in Catalog field, enter the field name for the item IDs in your main content.

-

Check that the values of Item ID Field in Signals and Count Field in Signals match the field names in your signals data.

-

Save the job.

-

Click Run > Start to run the job.

-

Navigate to Collections > Jobs > Add+ > Classification to create a new Classification job.

-

Configure the job as follows:

-

In the Model Deployment Name field, enter an ID for the new classification model.

-

In the Training Data Path field, enter the collection name or cloud storage path from the Build Training Data job’s Output Path field.

-

In the Training Data Format field, leave the default `solr` value if the Training Data Path is a collection or if you used the default format in your Build Training Data job configuration. If you configured the Build Training Data job to output a different format, enter it here.

-

In the Training collection content field, enter `query_s`, the default content field name in the Build Training Data job’s output.

-

In the Training collection class field, enter `category_s`, the default category field name in the Build Training Data job’s output.

For additional configuration details, see Best practices below.

-

Save the job.

-

Verify that the Build Training Data job has finished successfully.

-

Click Run > Start to run the job.

-

Navigate to Querying > Query Workbench > Load and select your query pipeline.

-

Configure the query pipeline as follows:

-

Add a new Machine Learning stage.

-

In the Model ID field, enter the name from the Classification job’s Model Deployment Name field.

-

In the Model input transformation script field, enter the following:

    var modelInput = new java.util.HashMap()
    modelInput.put("text", request.getFirstParam("q"))
    modelInput

-

In the Model output transformation script field, enter the following:

    // Use this script if top_k predictions are needed.
    // It puts the predictions into the response documents
    // (this can be done only after the Solr Query stage).
    var jsonOutput = JSON.parse(modelOutput.get("_rawJsonResponse"))
    var parsedOutput = {};
    for (var i=0; i<jsonOutput["names"].length;i++){
        parsedOutput[jsonOutput["names"][i]] = jsonOutput["ndarray"][i]
    }

    var docs = response.get().getInnerResponse().getDocuments();
    var ndocs = new java.util.ArrayList();
    for (var i=0; i<docs.length;i++){
        var doc = docs[i];
        doc.putField("top_1_class", parsedOutput["top_1_class"][0])
        doc.putField("top_1_score", parsedOutput["top_1_score"][0])
        if ("top_k_classes" in parsedOutput) {
            doc.putField("top_k_classes", new java.util.ArrayList(parsedOutput["top_k_classes"][0]))
            doc.putField("top_k_scores", new java.util.ArrayList(parsedOutput["top_k_scores"][0]))
        }
        ndocs.add(doc);
    }
    response.get().getInnerResponse().updateDocuments(ndocs);

-

Click Apply.

-

Save the query pipeline.
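To see what the model output transformation script in the procedure above does with the model response, the following sketch runs the same parsing logic outside Fusion against a hand-written payload. The payload shape (parallel `names` and `ndarray` arrays) is inferred from the script itself, and the class names and scores are made up. The `parseModelOutput` and `fieldsForDocument` helpers are hypothetical and stand in for the Fusion `modelOutput` and `response` objects, which are not available here.

```javascript
// Made-up payload in the shape the output script appears to expect:
// "names" lists the output fields and "ndarray" holds one value array per field.
const rawJsonResponse = JSON.stringify({
  names: ["top_1_class", "top_1_score", "top_k_classes", "top_k_scores"],
  ndarray: [
    ["laptops"],
    [0.82],
    [["laptops", "tablets", "monitors"]],
    [[0.82, 0.11, 0.04]],
  ],
});

// Same name-to-value mapping as the first loop of the output script.
function parseModelOutput(raw) {
  const jsonOutput = JSON.parse(raw);
  const parsedOutput = {};
  for (let i = 0; i < jsonOutput.names.length; i++) {
    parsedOutput[jsonOutput.names[i]] = jsonOutput.ndarray[i];
  }
  return parsedOutput;
}

// Collect the fields that the script would write onto each response document.
function fieldsForDocument(parsedOutput) {
  const fields = {
    top_1_class: parsedOutput.top_1_class[0],
    top_1_score: parsedOutput.top_1_score[0],
  };
  // The top-k fields are optional, as in the original script.
  if ("top_k_classes" in parsedOutput) {
    fields.top_k_classes = parsedOutput.top_k_classes[0];
    fields.top_k_scores = parsedOutput.top_k_scores[0];
  }
  return fields;
}

const fields = fieldsForDocument(parseModelOutput(rawJsonResponse));
console.log(fields);
```

Testing the parsing step in isolation like this can help confirm the field names you expect before you wire the script into a pipeline stage.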

Custom output transformation script examples

    // To put into request
    request.putSingleParam("class", modelOutput.get("top_1_class")[0])
    request.putSingleParam("score", modelOutput.get("top_1_score")[0])
    // Or for example to apply Filter Query
    request.putSingleParam("fq", "class:" + modelOutput.get("top_1_class")[0])

    // To put into query context
    context.put("class", modelOutput.get("top_1_class")[0])
    context.put("score", modelOutput.get("top_1_score")[0])

    // To put into response documents (can be done only after Solr Query stage)
    var docs = response.get().getInnerResponse().getDocuments();
    var ndocs = new java.util.ArrayList();
    for (var i=0; i<docs.length;i++){
        var doc = docs[i];
        doc.putField("query_class", modelOutput.get("top_1_class")[0])
        doc.putField("query_score", modelOutput.get("top_1_score")[0])
        ndocs.add(doc);
    }
    response.get().getInnerResponse().updateDocuments(ndocs);

Best practices for configuring the Classification job

This job analyzes how your existing documents are categorized and produces a classification model that can be used to predict the categories of new documents at index time.

For detailed configuration instructions and examples, see Classify New Documents at Index Time or Classify New Queries.

This job takes raw text and an associated single class as input. Although it trains on single classes, there is an option to predict the top several classes with their scores.
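As an illustration of what a top-several-classes prediction means, the sketch below ranks a set of per-class scores and keeps the best few. It is a generic ranking example, not the Classification job's own code. The scores and the `topK` helper are hypothetical.

```javascript
// Return the k highest-scoring classes, best first.
function topK(scoresByClass, k) {
  return Object.entries(scoresByClass)
    .sort(([, a], [, b]) => b - a) // descending by score
    .slice(0, k)
    .map(([label, score]) => ({ label, score }));
}

// Made-up scores for a single query.
const scores = { laptops: 0.82, tablets: 0.11, monitors: 0.04, cables: 0.03 };
const top3 = topK(scores, 3);
console.log(top3);
```

The first entry of the result corresponds to the single best class, and the remaining entries are the runners-up with their scores.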

At a minimum, you must configure these:

-

An ID for this job

-

A Method; Logistic Regression is the default

-

A Model Deployment Name

-

The Training Collection

-

The Training collection content field, the document field containing the raw text

-

The Training collection class field containing the classes, labels, or other category data for the text